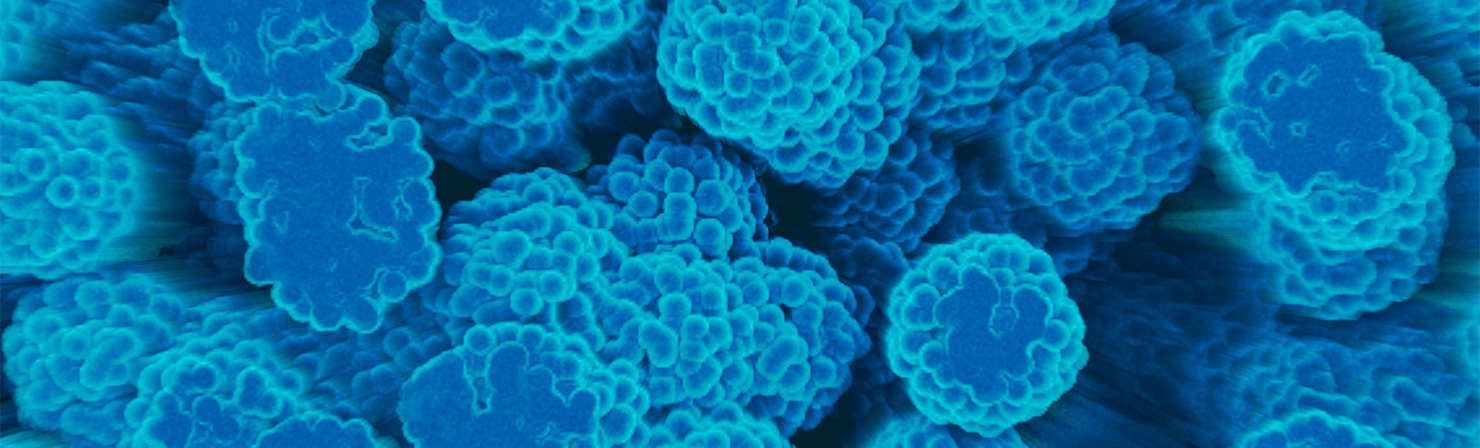

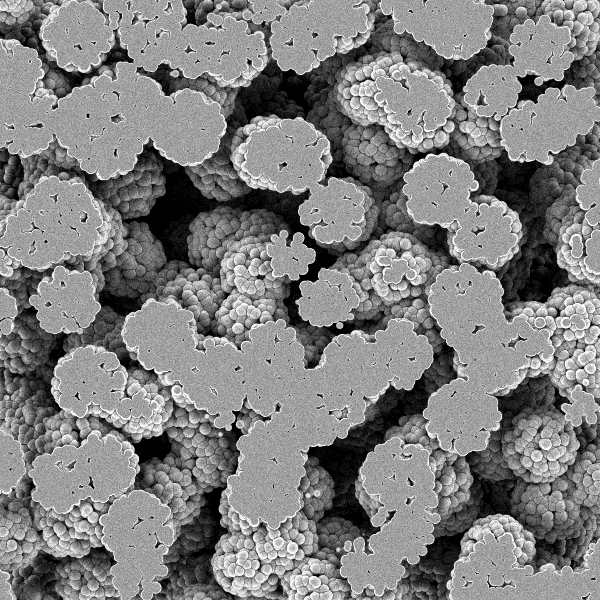

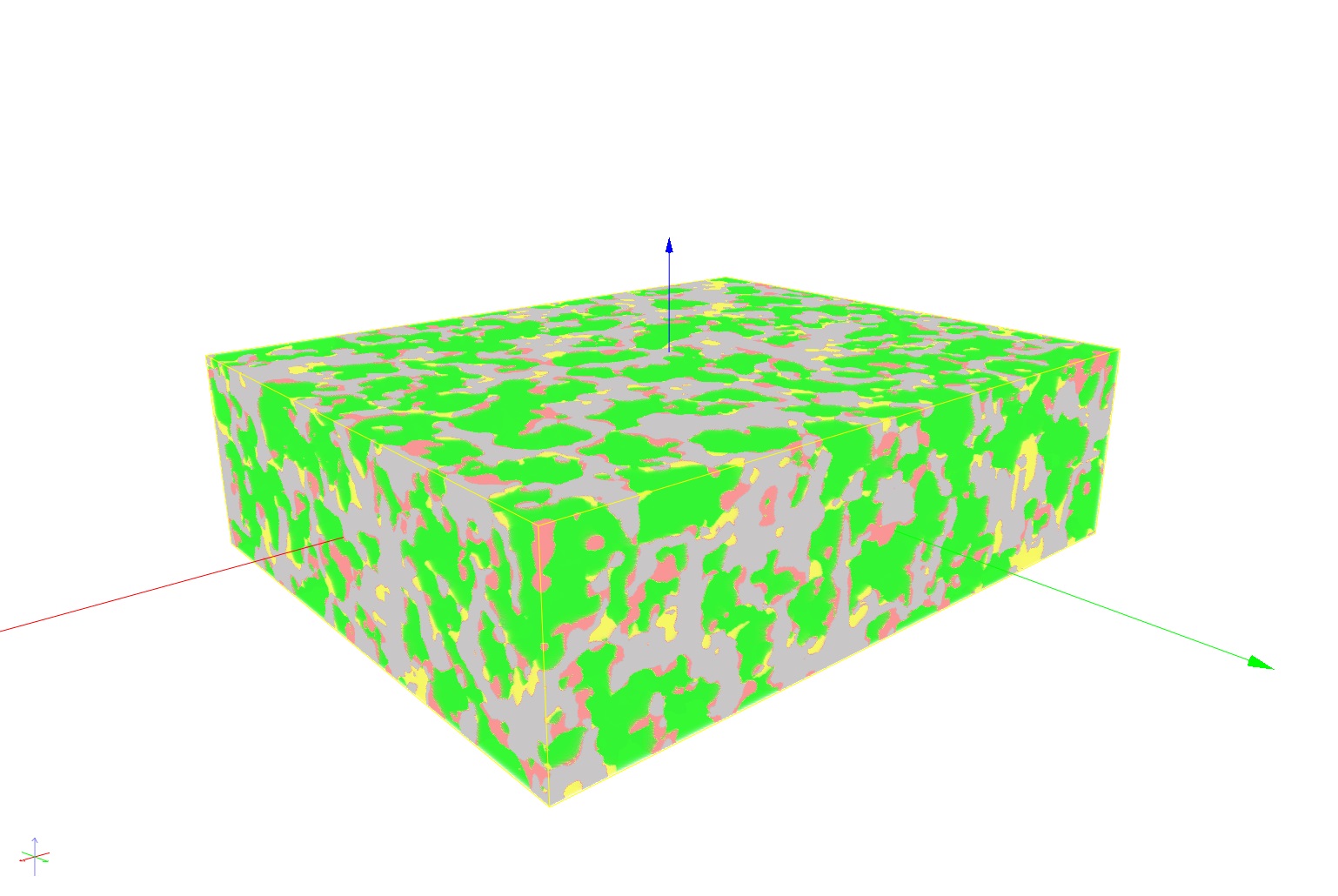

Modern materials such as gas diffusion layers for fuel cells, electrodes for lithium-ion batteries, filter media, and ceramic materials with active components have complex, multiscale structures that strongly influence macroscopic material behavior. 3D images of these structures provide a deeper understanding of how structure and properties are related. We contribute to this understanding with new deep learning methods.

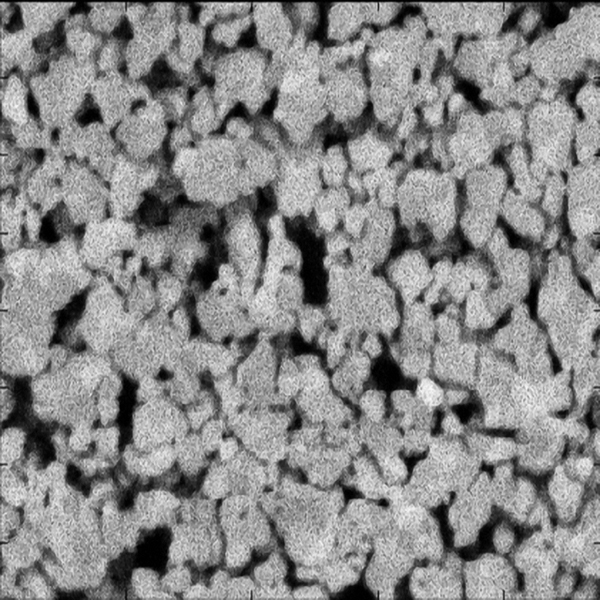

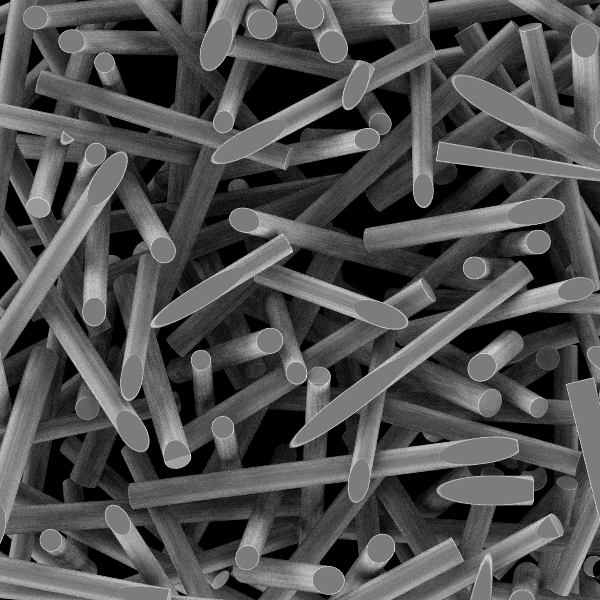

On the nanoscale, structures in the 5 to 100 nm range can be imaged in three dimensions using FIB-SEM serial sectioning. A focused ion beam (FIB) precisely cuts the structure of interest, and the exposed surface is imaged with a scanning electron microscope (SEM). The beam then ablates further material, and the newly exposed surface is imaged again. Repeating this cycle yields several hundred sectional images, which together form a volume data set.
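As a rough illustration of how a series of sectional images becomes a volume data set, the following minimal sketch stacks 2D arrays along a new depth axis with NumPy. It is not the acquisition or reconstruction pipeline used here: the function name `stack_sections`, the image size, the number of sections, and the random pixel values are all hypothetical stand-ins for real SEM images, and alignment between sections is assumed to be already done.

```python
import numpy as np


def stack_sections(sections):
    """Stack equally sized 2D section images into one 3D array (z, y, x).

    Hypothetical helper for illustration; assumes the sections are
    already aligned and ordered by cutting depth.
    """
    sections = [np.asarray(s) for s in sections]
    if not sections:
        raise ValueError("need at least one section image")
    shape = sections[0].shape
    if any(s.ndim != 2 or s.shape != shape for s in sections):
        raise ValueError("all sections must be 2D images of the same shape")
    # axis=0 becomes the cutting direction (one index per section)
    return np.stack(sections, axis=0)


# Synthetic stand-in for SEM images: 300 sections of 64 x 48 pixels (assumed values)
rng = np.random.default_rng(0)
sections = [
    rng.integers(0, 256, size=(64, 48), dtype=np.uint8) for _ in range(300)
]

volume = stack_sections(sections)
print(volume.shape)  # (300, 64, 48)
```

Each index along the first axis corresponds to one cut, so `volume[k]` returns the k-th sectional image, while slicing along the other axes gives virtual cross-sections through the imaged depth.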